Blog Posts

Sync Outlook Or Office 365 With Google

18 January 2021

Background

I really wanted to improve my working-from-home routine and utilise the features of the Google Assistant / Google Home I have in my home office.

The big issue was that the company I work for uses Office 365 and Outlook, and their calendars can’t be linked to Google Assistant on my Google Home.

Solutions

I experimented with various ways of sharing my Office 365 calendar with my personal Google Calendar, but nothing worked reliably.

That’s when I came across a great open-source project, Outlook Google Calendar Sync.

This tool can be set up to automatically update a selected Google calendar whenever you change an appointment in Outlook.

My Setup

So, with Outlook running on my work laptop, I installed Outlook Google Calendar Sync alongside it.

Rather than pollute my home calendar with work events, I created a dedicated calendar in my Google account specifically for my work events.

There were some events I didn’t want Google Assistant to announce, such as the calendar blocks I put in for a lunch break. The sync tool has this covered because it can exclude events in a given category, so I created a “No Google Sync” category and applied it to the events I didn’t want synced.

With the sync set to cover 14 days in the past and 168 days (24 weeks, roughly five and a half months) into the future, my Google calendar was ready. The sync runs every 5 hours, and it also pushes automatically whenever changes occur.

In the Google Assistant settings I pointed it at my new work calendar. Now, when I start work, I can say “Hey Google, good morning” and Google tells me all about my day.

Small Flaw

If I receive a meeting invite outside my working day and accept it on my phone, Outlook on my laptop won’t pick it up and sync it to Google Calendar, because the laptop is switched off outside working hours.

I could leave my work laptop on at all times. Then, when I accept an invite on my phone, Outlook on the laptop would receive the event and sync it to Google.

Other Notes

For anyone else who has a Google Home device in their home office, Google Assistant now has a neat built-in “Workday” feature that makes the device wake up and announce something at set times throughout the day.

This is a great way to remind you to get up and move around (something that I’ve found myself neglecting over the last 10 months).

Fitbit Overview Watch Face Launch

18 June 2019

Background

I’ve been interested in smart watches for a while now and in the past have attempted to create watch faces and apps for the Pebble.

At the beginning of the year I invested in a Fitbit & started looking for a stats-heavy watch face that looked nice and showed progress towards goals.

I came across ANDv2 and whilst it had a lot of the features I was looking for, there were some annoyances with it.

At first I raised a couple of issues on GitHub & then tried to reach out to the repository maintainer. I couldn’t get a response, so I set to work on making my own version of ANDv2.

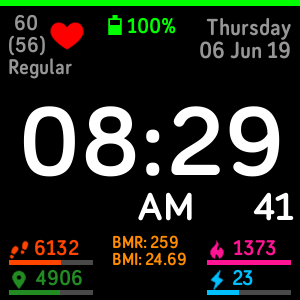

Introducing Overview

Overview is my fully open-source and actively maintained stats-heavy Fitbit watch face.

The watch face is published in the Fitbit Gallery, so it is easy to download and install on your watch. You can also use the handy QR code.

It supports all three Fitbit watches that take custom watch faces (Ionic, Versa & Versa Lite) and comes jam-packed with customisable options.

The features include:

- 12/24 hour clock support

- AM/PM optional display on 12 hour clock

- Optional show/hide of seconds

- Date with day name

- Heart rate with optional animation & zone display

- Steps

- Distance

- Calories

- Floors

- Active Minutes

- BMI & BMR

- Progress bars for activity goals

- Battery bar along the top

It also has support for the following languages:

- English

- French

- Italian

- German

- Spanish

- Japanese

- Korean

- Chinese (Simplified)

- Chinese (Traditional)

What Does It Look Like?

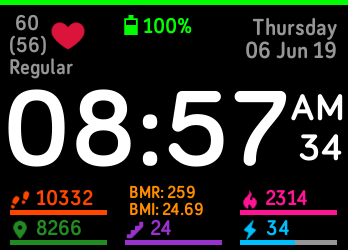

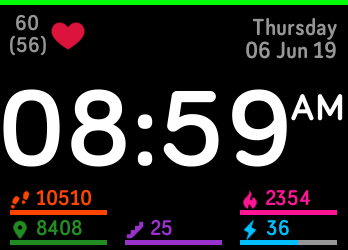

Ionic Device

The standard layout is as follows

The watch face can be cut down to the bare minimum of information like this

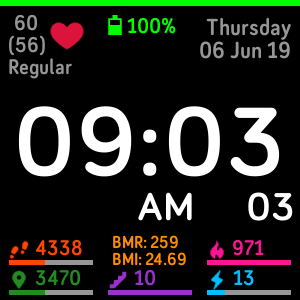

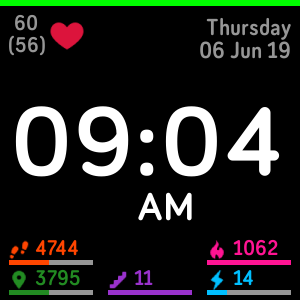

Versa Device

The standard layout is as follows

The watch face can be cut down to the bare minimum of information like this

Versa Lite Device

The standard layout is as follows

The watch face can be cut down to the bare minimum of information like this

Cost

Best of all, Overview is completely free to use!

There is a donation link in the Gallery listing.

What Next?

I really hope my watch face gathers some users who suggest additional features. So far, the only request I have had is to add weather information, but I would be really interested to hear more ideas.

The Technical Details Behind My Blog

28 July 2017

Overview

The full scoop on why and how I created my own blog hosted on GitHub Pages, and the technologies I used.

Why Go Self Designed?

This started out as a learning exercise.

I had played with GitHub Pages before on my Gallifrey project & with CloudFlare there too. That was all based on standard GitHub Pages templates with no blogging capability.

I had also used Bootstrap on a couple of work projects. I wanted to experiment with it and get more familiar with the inner workings of GitHub Pages, or rather Jekyll.

I had also never worked with things like comment sections or social sharing. Both are key to a successful blog, so this would be some interesting learning along the way.

The Design

My idea was simple: keep it clean and minimalist, and on mobile, strip it right down so only the content is on show.

I wanted a gradient background running from my favourite hex colour (#ABCDEF) to white. On top of it sits a white, rounded content panel, dead centre with a little shadow.

The content panel is then broken down into 4 sections:

- Header

- Content

- Sidebar

- Footer

Header

The header is a little under-utilised at present, with just a very simple title and navigation links.

On mobile, the navigation links collapse into a drop-down to save screen space. I learnt this nice trick using Bootstrap’s styling to show or hide different content at different screen sizes.

Content

The content area is, quite rightly, the largest section. It’s easy to read, with black text on a white background, and it is nicely framed with borders on all sides where it joins other content.

Sidebar

The sidebar is only visible on medium or large devices (i.e. phones or portrait tablets will not show it).

I’ve broken the sidebar into 3 vertical sections.

The first has my photo and links to my social networks. These icons are provided by Font Awesome, which supplies a stylesheet you can link to. You then add CSS classes to your elements to include little icons. With a bit of styling, these become link buttons with a nice hover effect!

The second lists the latest 3 blog posts I have made (plus an RSS link, another Font Awesome icon).

The third is a widget with my Twitter timeline (created by Twitter).

The sidebar helps keep the content from looking too wide on larger screens, whilst also providing links off to other useful items.

The Footer

Like the header, this is very underused. I may have some additional content to put here in the future, but I’m not sure what just yet!

Blog Posts

Design/Layout

I wanted to keep things how people expect to see blog posts, so up top we have the title, the publish date, some tags and a comment count.

This is followed by social sharing buttons provided by AddThis. AddThis can automatically recommend which sharing buttons to show based on usage, but I’ve gone with a static, hand-picked set for now. They allow remote customisation of the buttons on their side, which updates in real time. They also provide metrics on social shares, which is kind of cool!

The main content of the post is separated from the social sharing with a horizontal bar top and bottom.

Under the post content is the Disqus comment section. I had never used Disqus before either, and it was surprisingly easy to add to the posts. A quick sign-up process and a bit of JavaScript, and we were up and running. Again, Disqus has analytics and comes complete with comment moderation by default.

Post List

As with most blogs, there is a standard page listing all the posts. I’ve gone for a paginated approach with a maximum of 5 posts per page. This is the bit that took me the longest to get working, due to the complexity of Jekyll in this area. There are a lot of tricks you need to know to get it working, such as not using permalinks and making sure the page is HTML rather than Markdown!

The net result of that effort is nice, though. I’ve made sure to include the date, tags and comment count on these too, but there is no sharing.

This page also (like the sidebar) links off to my blog RSS feed.
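The post doesn’t say which pagination plugin the site uses. This sketch assumes the classic jekyll-paginate plugin, which only paginates an HTML index page (matching the “HTML rather than Markdown” trick above). Under that assumption, the 5-posts-per-page setting might be added to _config.yml like this. The paginate_path value is a hypothetical example.

```shell
#!/usr/bin/env bash
# Sketch only: assumes the classic jekyll-paginate plugin.
# Run from the root of the Jekyll site; paginate_path is a hypothetical example.
set -euo pipefail

# Append pagination settings to the site configuration.
# (If _config.yml already has a plugins list, add the entry there instead.)
cat >> _config.yml <<'EOF'
plugins:
  - jekyll-paginate
paginate: 5
paginate_path: "/blog/page:num/"
EOF

# Show the lines that were just added.
tail -n 4 _config.yml
```

Jekyll versions before 3.5 expect the plugin list under gems: rather than plugins:. With this plugin, the listing page loops over paginator.posts instead of site.posts.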

Static Content

I had a couple of pages on my old WordPress blog about me, my open source software, and links to other blogs and websites I read/follow.

I’ve migrated these pretty much as they were. I plan on sprucing up that content as time goes on, as it looks a little outdated.

Hosting/DNS/SSL/Caching/Analytics

Hosting

The site is hosted purely on GitHub Pages, which is free to use (if the repository is public). It also means readers can send me fixes should I make any silly spelling mistakes (which is almost certain to happen). Setting it up was a completely trivial exercise of creating a repository on GitHub.com and adding my pages to it!

DNS

It took me a while to decide exactly which domain name I wanted, but I settled on blyth.me.uk. A CNAME file in the root of my repository, plus a few DNS records added with my DNS provider, and I was up and running!
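The CNAME file GitHub Pages reads for a custom domain is just a plain text file holding the domain name. Here is a minimal sketch of creating it, run from the repository root, using the domain from this post.

```shell
#!/usr/bin/env bash
# Sketch: create the CNAME file that tells GitHub Pages which custom
# domain to serve the site on. Run from the root of the Pages repository.
set -euo pipefail

domain="blyth.me.uk"   # the domain from this post; substitute your own
printf '%s\n' "$domain" > CNAME
cat CNAME
```

Commit and push the file as usual. The DNS records themselves are created with the DNS provider. For an apex domain like this one, these are typically A records pointing at GitHub’s Pages addresses. For a subdomain, it is a CNAME record pointing at the username.github.io address.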

SSL

You could say that SSL is overkill for a site like mine. But when a service like CloudFlare is offering it as part of their free package, you would be a fool not to take it!

All I needed to do was point my domain’s nameservers at CloudFlare, which migrated the entries I had already created with my DNS provider. With “Always Use HTTPS” turned on, they even handle the automatic redirects for me!

Caching

Following on nicely from SSL, CloudFlare also provides free caching. It doesn’t cache HTML files by default, but you can create a page rule stating that all content on the domain should be cached (the “Cache Everything” cache level). I’ve set this up with a 2-day cache for all content.

This caught me out once when making changes, but after a quick cache purge on their website I could see my changes instantly!

Analytics

The WordPress site I came from had analytics built in. I didn’t want to lose this visibility, so I have added Google Analytics to my site. I already had a Google Analytics account set up from Gallifrey, so this is just a second “Property”.

SEO

Jekyll has a neat plugin called Jekyll SEO Tag (jekyll-seo-tag). It takes some configuration information about the site and adds a whole load of metadata into the HEAD of every page. This helps improve SEO and means links on social networks get nice-looking cards!

The Cost

The cost was something I wanted to keep as low as possible.

I think I have achieved this: my only cost is the domain name.

A win for hosting yourself I would say!

New Blog

25 July 2017

Introducing my new blog, designed by myself (no nasty templates!) and hosted on GitHub Pages.

My old WordPress blog is here. Over the coming days I will be migrating selected posts here with their original publish dates & updating the WordPress site to link here, so watch this space.

The site is almost complete, but there are still a few things I need to update, such as:

- Content updates

- Custom 404 page

- Social shares on posts

- Auto-tweet from my Twitter account on new posts

- Custom domain

- CloudFlare/SSL

- Maybe more things…

Migrating Multiple Folders Between Git Repositories

07 June 2017

The Brief

I recently needed to move parts of a Git repository over to a new repository while keeping all the history from the original repository.

The Research

After a bit of searching on Google and finding many resources on “the best way to do this”, I hit a wall. The general approaches I found looked good, but there was no one-size-fits-all “tutorial” for how to do it.

The Eureka Moment

After muddling through (and deleting my local copies of the repository many times!) I finally managed to achieve what I wanted.

The result was a pull request in each repository: one deleting the folders with my code, and the other adding them complete with all their history (that second pull request looks very scary, with a lot of commits).

But how did I do it, I hear you ask? Well, this post aims to make it easier for everyone, so keep reading!

The Process

The process below lists all the Git Bash commands you’re going to need.

Start from any folder you have on disk; we’re going to leave it clean at the end :)

At various points in the process, you will need to modify a command to put in your own values. These are shown as text in brackets.

Get Your Source/From Repo

To avoid screwing up any local copies of the repo you already have, I suggest a fresh clone with its remote removed, so nothing from this copy can accidentally be pushed back.

git clone (URL-to-from-repo) FromRepo

cd FromRepo

git remote rm origin

Clean Your Source/From Repo

Since we only want the history to contain certain folders, we can run a command that removes everything outside those folders from the history. This essentially rewrites history with just the bits we want, and it may take some time if you have a lot of history.

After this, we are done with this repo for now, so move back up to the root folder.

git filter-branch --index-filter 'git rm --cached -qr --ignore-unmatch -- . && git reset -q $GIT_COMMIT -- (folder-paths-space-separated)' --prune-empty -- --all

git reset --hard

cd ..
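Before running the filter-branch command on a real repository, it can help to see exactly what it keeps. This sketch builds a throwaway repository in a temporary directory with two hypothetical folders, keep and drop. It then runs the same command with keep as the only folder path, and the commit that only touched drop disappears from history. The demo identity and the FILTER_BRANCH_SQUELCH_WARNING variable (which skips the pause newer Git versions add before filter-branch runs) are assumptions for the demo, not part of the steps above.

```shell
#!/usr/bin/env bash
# Sketch: try the history-filtering command on a throwaway repository.
# "keep" and "drop" are hypothetical folder names used only for this demo.
set -euo pipefail

# Demo identity so commits work on a machine without git config.
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com
# Newer Git versions pause to warn about filter-branch; this skips the pause.
export FILTER_BRANCH_SQUELCH_WARNING=1

work=$(mktemp -d)
git init -q "$work/FromRepo"
cd "$work/FromRepo"

# Three commits: two touch keep/, one touches only drop/.
mkdir keep drop
echo one > keep/a.txt
git add keep && git commit -q -m "Add keep/a.txt"
echo two > drop/b.txt
git add drop && git commit -q -m "Add drop/b.txt"
echo three > keep/c.txt
git add keep && git commit -q -m "Add keep/c.txt"

# Same command as above, with "keep" as the only folder path.
git filter-branch --index-filter \
  'git rm --cached -qr --ignore-unmatch -- . && git reset -q $GIT_COMMIT -- keep' \
  --prune-empty -- --all > /dev/null 2>&1
git reset -q --hard

git ls-files          # only files under keep/ remain
git log --format=%s   # the drop/-only commit has been pruned
```

Newer Git documentation recommends the separate git filter-repo tool for rewrites like this. filter-branch still works; it just warns first.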

Getting Your Destination/To Repo

We also need a fresh clone of our destination repository. This prevents us screwing up any local copy we already have!

git clone (url-to-repo) ToRepo

cd ToRepo

Linking Our Repositories

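Next, we want to pull the Source/From repo into our Destination/To repo and put the result on a branch called “feature/RepoMigrate”. The two repositories share no common history, so Git 2.9 and later refuse to merge them unless you pass --allow-unrelated-histories. Passing --no-rebase also stops newer versions of Git asking how the branches should be reconciled.

git remote add FromRepo (full-path-of-from-repo)

git pull --no-rebase --allow-unrelated-histories FromRepo master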

git remote rm FromRepo

git branch feature/RepoMigrate

git reset --hard origin/master

Pushing Our Migrated Folders

Now we have all our folders in our repo, we can push the branch to the remote repository, and then we are done with this copy of the repository too.

git checkout feature/RepoMigrate

git push origin feature/RepoMigrate

cd ..
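The linking and pushing steps can be rehearsed end to end against local stand-ins before touching real remotes. In this sketch, a bare repository named to.git plays the hosted destination repo and moduleA is a made-up folder; both are assumptions. The pull passes --allow-unrelated-histories, which Git 2.9 and later require when merging two repositories that share no history. It also passes --no-rebase and --no-edit, so the pull neither asks how to reconcile the branches nor opens an editor.

```shell
#!/usr/bin/env bash
# Sketch: the "link" and "push" steps against throwaway local repositories.
# to.git stands in for the hosted destination repo; moduleA is a made-up folder.
set -euo pipefail

export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

root=$(mktemp -d)
cd "$root"

# Stand-in for the already filtered source repository.
git init -q FromRepo
git -C FromRepo symbolic-ref HEAD refs/heads/master
mkdir FromRepo/moduleA
echo a > FromRepo/moduleA/a.txt
git -C FromRepo add .
git -C FromRepo commit -q -m "Add moduleA"

# Stand-in for the hosted destination repository, seeded with one commit.
git init -q --bare to.git
git -C to.git symbolic-ref HEAD refs/heads/master
git init -q seed
git -C seed symbolic-ref HEAD refs/heads/master
echo readme > seed/README.txt
git -C seed add .
git -C seed commit -q -m "Initial commit"
git -C seed push -q "$root/to.git" master

# The linking and pushing steps, with the unrelated-histories opt-in.
git clone -q "$root/to.git" ToRepo
cd ToRepo
git remote add FromRepo "$root/FromRepo"
git pull -q --no-rebase --allow-unrelated-histories --no-edit FromRepo master
git remote rm FromRepo
git branch feature/RepoMigrate
git reset -q --hard origin/master
git checkout -q feature/RepoMigrate
git push -q origin feature/RepoMigrate
cd ..

# The "hosted" repo now has the branch carrying both histories.
git -C to.git log --format=%s feature/RepoMigrate
```

Master in the stand-in remote is left untouched. All the migrated history arrives only on feature/RepoMigrate, ready for a pull request.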

Clean Up Pass 1

So far so good, right? We’ve got the branch ready to bring the important parts of the source/from repository into the new one, so let’s have a tidy up!

rm -rf FromRepo

rm -rf ToRepo

Removing Folders From Source/From Repository

We need a fresh clone of the source/from repository to make these updates, and we want to do the clean-up on a branch.

git clone (url-to-from-repo) FromRepo

cd FromRepo

git branch feature/RepoMigrate

git checkout feature/RepoMigrate

Remove and Commit

For each folder you have migrated, remove it and commit the removal:

rm -rf (folder-path-migrated)

git commit -a -m 'Remove migrated folder (folder-name) as moved to (new-repo)'
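When several folders were migrated, the remove-and-commit step can be wrapped in a loop. This sketch defines a hypothetical helper, remove_migrated, that makes one commit per folder. It tries the helper on a throwaway repository with made-up folder names and a made-up new repository name (NewRepo).

```shell
#!/usr/bin/env bash
# Sketch: remove several migrated folders, one commit per folder.
# remove_migrated, NewRepo and the folder names are hypothetical.
set -euo pipefail

# Usage: remove_migrated <new-repo-name> <folder>...
remove_migrated() {
  local new_repo=$1
  shift
  local folder
  for folder in "$@"; do
    rm -rf -- "$folder"
    # -a stages the deletions made by rm before committing.
    git commit -q -a -m "Remove migrated folder $folder as moved to $new_repo"
  done
}

# Throwaway repository to try the helper on.
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com
demo=$(mktemp -d)
cd "$demo"
git init -q
mkdir -p src/moduleA src/moduleB docs
echo a > src/moduleA/a.txt
echo b > src/moduleB/b.txt
echo d > docs/readme.txt
git add .
git commit -q -m "Initial commit"
git checkout -q -b feature/RepoMigrate

remove_migrated NewRepo src/moduleA src/moduleB
git log --format=%s   # two removal commits above "Initial commit"
```

If a folder name is mistyped, git commit finds nothing to commit and exits non-zero. Because of set -e, the loop then stops rather than carrying on silently.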

Now Push & Tidy Up

You’re almost done! We just need to push and tidy up.

git push origin feature/RepoMigrate

cd ..

rm -rf FromRepo

What Next?

Well, you now have a branch called “feature/RepoMigrate” in each repo: one removes the folders and the other adds them.

Personally, I raised a pull request into master for each of these (and had to make some changes to get the CI working correctly in the destination/to repo).